NVIDIA announced today at GTC 2019 that its cloud-based DRIVE Constellation simulation platform is now available, enabling autonomous vehicles to be driven and tested in virtual environments efficiently and practically.

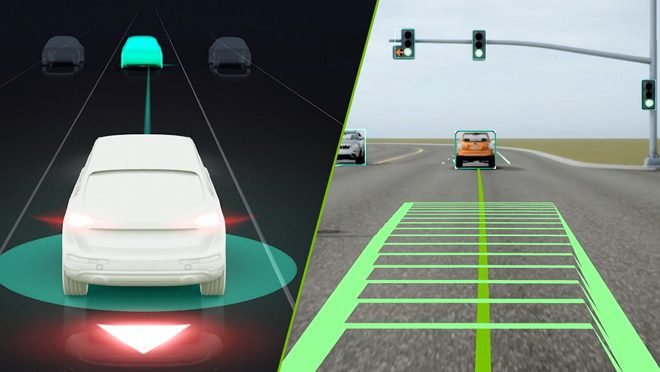

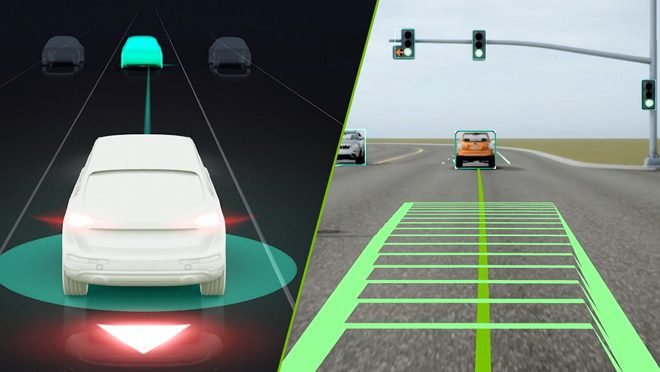

DRIVE Constellation is a data center solution that provides “a closed loop process with bit-accurate, timing-accurate, hardware-in-the-loop testing.” It comprises two servers: the DRIVE Constellation Simulator, which generates the sensor output of the virtual car, and the DRIVE Constellation Vehicle, which contains the DRIVE AGX Pegasus AI car computer. The Vehicle server receives the simulated sensor data and sends vehicle control commands back to the Simulator, closing the loop.
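To make the closed loop concrete, here is a minimal Python sketch of the render, decide, apply cycle that the two servers carry out. Everything in it is an assumption for illustration: `Simulator`, `VehicleComputer`, the one-dimensional road, and the braking policy are hypothetical stand-ins, not NVIDIA's DRIVE Constellation or DRIVE AGX interfaces, and real sensor output would be camera, lidar, and radar data rather than a single distance value.

```python
from dataclasses import dataclass

# HYPOTHETICAL stand-ins for illustration only; these are not NVIDIA APIs.


@dataclass
class VehicleState:
    position_m: float  # distance travelled along a straight 1-D road
    speed_mps: float   # metres per second


@dataclass
class SensorFrame:
    timestamp_s: float
    gap_to_obstacle_m: float  # toy substitute for camera/lidar/radar output


@dataclass
class ControlCommand:
    accel_mps2: float  # positive accelerates, negative brakes


class Simulator:
    """Simulator-server role: owns the virtual world and renders sensor frames."""

    def __init__(self, obstacle_at_m: float, start_speed_mps: float = 15.0, dt_s: float = 0.1):
        self.obstacle_at_m = obstacle_at_m
        self.dt_s = dt_s
        self.time_s = 0.0
        self.state = VehicleState(position_m=0.0, speed_mps=start_speed_mps)

    def render_sensors(self) -> SensorFrame:
        gap = self.obstacle_at_m - self.state.position_m
        return SensorFrame(timestamp_s=self.time_s, gap_to_obstacle_m=gap)

    def apply(self, cmd: ControlCommand) -> None:
        # Advance the virtual car by one fixed timestep; speed is clamped at zero.
        s = self.state
        s.speed_mps = max(0.0, s.speed_mps + cmd.accel_mps2 * self.dt_s)
        s.position_m += s.speed_mps * self.dt_s
        self.time_s += self.dt_s


class VehicleComputer:
    """Vehicle-server role: consumes sensor frames and returns control commands."""

    def __init__(self, safe_gap_m: float = 20.0, brake_mps2: float = 6.0):
        self.safe_gap_m = safe_gap_m
        self.brake_mps2 = brake_mps2

    def step(self, frame: SensorFrame) -> ControlCommand:
        # Toy policy: brake hard once inside the safe gap, otherwise hold speed.
        if frame.gap_to_obstacle_m < self.safe_gap_m:
            return ControlCommand(accel_mps2=-self.brake_mps2)
        return ControlCommand(accel_mps2=0.0)


def run_closed_loop(sim: Simulator, car: VehicleComputer, steps: int) -> float:
    """Run the render -> decide -> apply loop; return the smallest gap seen."""
    min_gap = float("inf")
    for _ in range(steps):
        frame = sim.render_sensors()
        min_gap = min(min_gap, frame.gap_to_obstacle_m)
        sim.apply(car.step(frame))
    return min_gap
```

With a 100 m obstacle, a 15 m/s start, and the default 20 m braking threshold, `run_closed_loop(Simulator(obstacle_at_m=100.0), VehicleComputer(), steps=200)` starts braking when the gap first drops to 19 m and comes to rest about 1 m short of the obstacle. Because the loop advances on a fixed timestep, repeated runs give identical results, a loose analogue of the repeatability that timing-accurate simulation aims for.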

Running side by side, the two servers enable bit-accurate, real-time simulation. The setup is also efficient enough to accumulate driving experience at scale: a fleet of nearly 3,000 DRIVE Constellation units can drive more than 1 billion miles per year.
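As a rough sanity check on the fleet figure, using 3,000 as a round number and assuming (the announcement does not say this) that every unit simulates driving around the clock, 8,760 hours a year, the per-unit workload works out to:

$$
\frac{1{,}000{,}000{,}000\ \text{miles/year}}{3{,}000\ \text{units}} \approx 333{,}333\ \frac{\text{miles}}{\text{unit} \cdot \text{year}},
\qquad
\frac{333{,}333\ \text{miles}}{8{,}760\ \text{h}} \approx 38\ \text{mph}
$$

Under that assumption, each unit would have to sustain an average of roughly 38 mph of simulated driving, every hour of the year, for the fleet to reach 1 billion miles.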

Moreover, every mile driven in DRIVE Constellation can be packed with meaningful events, including rare and hazardous ones that are hard to encounter on real roads.

In announcing the platform, NVIDIA CEO Jensen Huang demonstrated the “seamless workflow” through which DRIVE Constellation runs driving tests and delivers results:

1. It is a cloud-based, end-to-end workflow, which means:

   1. Users can access the platform remotely from anywhere via the cloud.

   2. Developers can submit specific simulation scenarios and set specific evaluators to determine how well the autonomous vehicle performs.

   3. Running the same test in parallel across many scenario variations demonstrates the platform’s capacity for large-scale validation. Multiple DRIVE Constellation units can also run different simulation tests in parallel.

2. It is an open platform, which means:

   1. DRIVE Constellation provides a programming interface that lets DRIVE Sim ecosystem partners integrate their environment models, vehicle models, sensor models, and traffic scenarios. Combining contributions from multiple partners produces comprehensive, diverse, and complex testing environments.

   2. Cognata, an Israel-based simulation company, has used global-scale real-world data captured by traffic cameras to build accurate traffic and scenario models. These models are supported on DRIVE Constellation and can also help developers create their own scenarios.

   3. With the help of ON Semiconductor, a supplier of semiconductors and sensors, DRIVE Constellation supports camera, lidar, and radar sensors, improving simulation accuracy.

   4. In addition, the platform can be used to evaluate vehicles against third-party and regulatory autonomous vehicle standards.

3. It enables rigorous testing:

   Developers can use DRIVE Constellation to test their AVs under all types of simulated conditions and obtain the validation they need, so that the vehicles can ultimately handle different scenarios “seamlessly.”
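The parallel, evaluator-driven workflow in the list above can be sketched in Python under heavy assumptions: the `Scenario` fields, the toy braking test `run_test`, and the two evaluators are hypothetical stand-ins, not DRIVE Constellation's actual scenario format or evaluator interface. The same test is run across a sweep of scenario variants on a thread pool, which here merely stands in for multiple simulation units, and each developer-defined evaluator scores every run.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from itertools import product
from typing import Callable, Dict, List

# HYPOTHETICAL scenario format and evaluators for illustration; not a DRIVE Constellation API.


@dataclass(frozen=True)
class Scenario:
    initial_speed_mps: float
    obstacle_at_m: float


@dataclass(frozen=True)
class RunResult:
    scenario: Scenario
    min_gap_m: float


def run_test(scenario: Scenario, brake_at_gap_m: float = 35.0,
             decel_mps2: float = 6.0, dt_s: float = 0.05) -> RunResult:
    """Same toy test for every scenario: cruise, then brake once the gap drops below brake_at_gap_m."""
    pos, speed = 0.0, scenario.initial_speed_mps
    min_gap = scenario.obstacle_at_m
    while speed > 0.0:
        gap = scenario.obstacle_at_m - pos
        min_gap = min(min_gap, gap)
        if gap < brake_at_gap_m:
            speed = max(0.0, speed - decel_mps2 * dt_s)
        pos += speed * dt_s
    # Include the final resting position in the minimum.
    return RunResult(scenario, min(min_gap, scenario.obstacle_at_m - pos))


Evaluator = Callable[[RunResult], bool]

# Developer-defined pass/fail checks applied to every run.
EVALUATORS: Dict[str, Evaluator] = {
    "no_collision": lambda r: r.min_gap_m > 0.0,
    "keeps_2m_margin": lambda r: r.min_gap_m >= 2.0,
}


def validate(scenarios: List[Scenario], evaluators: Dict[str, Evaluator],
             workers: int = 4) -> Dict[Scenario, Dict[str, bool]]:
    """Run the test on all scenarios in parallel, then score each run with every evaluator."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        results = list(pool.map(run_test, scenarios))
    return {r.scenario: {name: ev(r) for name, ev in evaluators.items()} for r in results}


if __name__ == "__main__":
    # Sweep: three speeds x two obstacle distances = six variants of one test.
    sweep = [Scenario(v, d) for v, d in product([10.0, 20.0, 30.0], [50.0, 100.0])]
    for scenario, verdicts in validate(sweep, EVALUATORS).items():
        print(scenario, verdicts)
```

With these toy parameters, the 10 m/s runs pass both checks, the 20 m/s runs stop roughly 1.2 m short of the obstacle (no collision, but inside the 2 m margin), and the 30 m/s runs collide. The obstacle distance does not change the verdicts because braking is triggered by the remaining gap, not by distance travelled.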

As part of an extended partnership between NVIDIA and Toyota, Toyota Research Institute-Advanced Development (TRI-AD) will use DRIVE Constellation to support the development and production of autonomous vehicles. The platform can simulate extensive driving in challenging scenarios more efficiently, cost-effectively, and safely than real-world testing.

Huang also announced NVIDIA DRIVE AP2X, a complete Level 2+ autonomous vehicle platform.

DRIVE AP2X is an automated driving solution that incorporates DRIVE AutoPilot software, DRIVE AGX, DRIVE validation tools, DRIVE AV autonomous driving software, and the DRIVE IX intelligent cockpit experience.

Each component runs on the energy-efficient NVIDIA Xavier system-on-a-chip (SoC) and makes use of the DriveWorks acceleration libraries and the DRIVE OS real-time operating system.

DRIVE AP2X Software 9.0, scheduled for release next quarter, adds new deep neural networks (DNNs), facial recognition capabilities, and additional sensor integration options:

1. With MapNet, the software will offer enhanced mapping and localization, and a suite of three distinct path-planning DNNs will improve accuracy and safety.

2. ClearSightNet will let the vehicle detect when its sensors are obstructed and take the necessary action.

3. Facial recognition will support driver monitoring as well as adjustments to car functions and settings.

DRIVE AP2X also includes the DRIVE Planning and Control software layer as part of its DRIVE AV software suite. This layer comprises a route planner, a lane planner, and a behavior planner; it interprets sensor data, determines the corresponding actions, and analyzes and predicts the dynamics of the surrounding environment. Through the NVIDIA Safety Force Field (SFF), it helps deliver a safe and comfortable driving experience.

As a complete Level 2+ automated driving solution, NVIDIA DRIVE AP2X can thus help bring AI-powered driving to the road.